Throughout my 15-year career as a software engineer, I’ve spent much of my time working with open-source software. Open-source code is publicly visible and modifiable, and it sits in nearly all the software we use today. It is based on the premise of reusing solutions to problems that need to be solved regularly. In our industry, a good example is Leaflet, a library used by many web applications, including the PlaceChangers platform, to draw interactive maps.

Changes to open source software are made transparently in an ongoing discussion between engineers and users. Open-source software works as an approach to software development precisely because of the software systems and associated best practices that support it, broadly comprising three key components:

- An issue tracker, where bugs and feature requests are logged to drive future improvements.

- A version control system that maintains a history of changes to the software’s source code over time.

- A suite of automated tests that checks any incoming code changes for correctness.

The key best practices that tie these three systems together are the “pull request” - in which a solution that addresses an issue in the tracker is presented in the form of modifications to the existing codebase - and the “code review” - in which others review the proposed modifications, and any problems addressed, before the modifications are accepted and the codebase changed.

The process for a planning application is not unlike an open-source pull request. Stay with me on this one: a proposed modification is submitted for review, opened to public scrutiny, required to pass several tests, and, finally, “merged”. They are not the same, but they have key aspects in common: both aim to scrutinise and decide on applications transparently.

In this article, I discuss the lack of standard software systems, and associated best practices, in planning and outline how open source software gives us a picture of what such systems and practices might look like.

Where we are now: parameter plans and local plans

Now, we are at a point in the history of British town planning where we’re seeing a move from a discretionary system toward a codification of planning policies. Since the release of Planning for the Future there’s been a lot of talk about design codes and local plans. In January 2021, MHCLG released their proposed National Model Design Code (NMDC), authored by URBED, in a project headed up by David Rudlin, which will be piloted by several local authorities in “pathfinder projects” later this year.

The NMDC provides an outline of the kind of model against which proposed developments could be checked objectively without interpreting policy documents. As long as the parameters are well-chosen, the outcome of this move should be a substantial de-risking of the planning process and, of course, better urban design in reality.

Since the publication of the planning white paper, Laura Alvarez, with her work on the Nottingham City Council’s Design Quality Framework, has emerged as a champion of, and authority on, the implementation and application of design coding in planning approvals in the UK. Nottingham City Council’s approach to planning is proactive and innovative and provides a picture of what Planning for the Future aims to achieve.

The council has invested heavily in expertise for resourcing the pre-application stage. Among other things, they’ve introduced an independent Design Review Panel composed of specialists. Planning applicants are actively encouraged to engage in the pre-application process, which always involves a Design Issues Team. The council offers applicants support for collaborative work, design workshops and co-design approaches.

Of course, this is still a manual, document-driven process and a costly one. Nevertheless, it gets results. Applications that go through pre-application consultation in Nottingham take ten weeks less on average to get planning approval. They also carry eleven fewer planning conditions than applications that don’t engage in the pre-application process. Meanwhile, the proportion of applications refused in Nottingham has dropped from 5% to nearly 0% since 2016.

Planning is a design discipline, and, as studies have shown, there is a lack of expertise in most councils regarding design guidance. Many councils are overstretched, and investment in knowledge of the kind undertaken by Nottingham City Council will need to be supported by the national government as part of planning reforms.

Looking at it from a background in software engineering, it is clear to me that planning is also an engineering discipline - a field of expertise with a set of practices according to which it can be done more or less correctly. It makes sense for the practice to have a technological underpinning. Indeed, this premise sits behind much of what is to be found in the planning white paper.

I notice that many of the diagrams in the NMDC look a lot like parameter plans. A parameter plan is an outline of a proposed development expressed in terms of high-level parameters, such as building heights, density, land uses, site access points and travel volumes (you can see an example of parameter plans here). Built environment professionals reading this article will recognise the term as referring to the design detail submitted in an outline planning application.

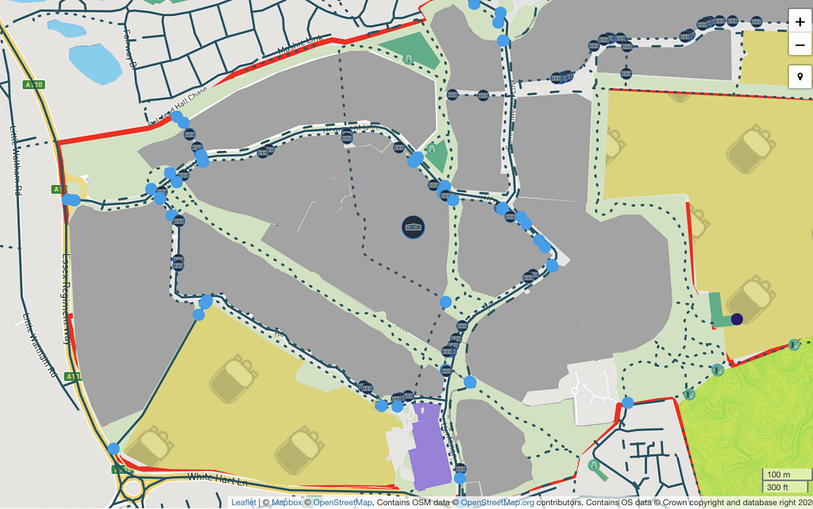

This image shows an interactive site plan on the PlaceChangers platform with a focus on land uses and access. A digital representation of land uses and connectivity allows for automatic checking of walking access to open spaces, for instance.

The challenge posed by the planning white paper is the matching of planning guidance parameters with submissions by planning applicants. If, at this level, the planning policy and the planning application take fundamentally the same form, then it’s reasonable to suggest that the one ought to be able to be checked against the other. At least on the parameters that are selected, they either match or they don’t.
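To make the idea of matching concrete, here is a minimal sketch in Python. It assumes that both the policy and the application can be reduced to named numeric parameters. The parameter names, the limits and the `Limit` and `check_application` helpers are all hypothetical, invented for illustration; they are not taken from the NMDC or from any real local plan.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Limit:
    """An allowed range for one policy parameter (hypothetical structure)."""
    minimum: Optional[float] = None
    maximum: Optional[float] = None

    def allows(self, value: float) -> bool:
        # A missing bound means the parameter is unconstrained on that side.
        if self.minimum is not None and value < self.minimum:
            return False
        if self.maximum is not None and value > self.maximum:
            return False
        return True


def check_application(policy: Dict[str, Limit],
                      application: Dict[str, float]) -> Dict[str, bool]:
    """Return pass/fail for every parameter that the policy defines."""
    results = {}
    for name, limit in policy.items():
        value = application.get(name)
        # A parameter the applicant has not supplied counts as a failure.
        results[name] = value is not None and limit.allows(value)
    return results


# Illustrative values only; these are assumptions, not real policy.
policy = {
    "max_building_height_m": Limit(maximum=18.0),
    "dwellings_per_hectare": Limit(minimum=30.0, maximum=60.0),
    "open_space_share": Limit(minimum=0.15),
}
application = {
    "max_building_height_m": 21.0,
    "dwellings_per_hectare": 45.0,
    "open_space_share": 0.2,
}

results = check_application(policy, application)
for name, passed in results.items():
    print(name, "pass" if passed else "fail")
```

In this example the proposed building height exceeds the assumed limit, so that parameter fails while the other two pass. The check itself is trivial; the hard part is agreeing on the parameter names and units in the first place.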

The key to rising to this challenge would be a common language or open format that allows this matching to be done. Many local authorities already publish their local plans, and their strategic allocations, in GIS formats on online maps. However, there is no existing standard for this format, and the existing data ecosystem, as rich as it may be, is chaotic and unorganised. These datasets cannot be interpreted consistently and they contain no standard link back to a history of design decisions over time.

Opportunities: open processes

As Jonathan Easton, a barrister practising in the field of town and country planning, stated in a recent Twitter post about the experience of wading through thousands of documents for a recent planning application, “Digital is great if it's properly accessible and fosters engagement. Otherwise it’s pretty crap.” Indeed, Graeme Moore of Oldham Council confirmed that “It’s no easier [...] from the other side!” If a planning law practitioner and a metropolitan borough councillor struggle with the existing planning software systems, what chance do the public have?

In 2014, the Creative Exchange partnered with Liverpool City Council and Red Ninja to build a mobile app called Open Planning, a prototype for a new software-driven public consultation approach that makes planning applications conversational, giving members of the public geospatial context for planning conversations. Their rationale was straightforward: “The planning system is a public mechanism that manages the use and development of land and buildings, shaping the built environment in which we all live.”

Indeed, this is the rationale behind the planning white paper’s aim to “move the democracy forward” by enhancing public consultation during the development of local plans. The consultation stage for local plans would be the time for residents and other interested parties to submit issues. The local planning authority would, at the end of that stage, consolidate the suggestions into a local plan, which would be digitised in a series of maps available to the public for review.

Key to the implementation of the Open Planning app was a back-end system based on “scraping”, a process in which human-readable documents are converted into machine-readable data formats. Populating databases this way is an immensely difficult process prone to fragility. Imagine if it weren’t required. As Nottingham City Council’s approach demonstrates, key to streamlining planning processes is, first of all, getting the stakeholders - local government, developers and the public - looking at the same plan.

The key to unlocking opportunities for automatic code checking is agreeing on how parameters in planning applications and local plans can be described in digital formats.

In 2015, Sebastian Weise, who had worked on Open Planning as part of his PhD at Lancaster University, went on to build what would become the PlaceChangers platform, which is now used to support consultations on development master plans. In the past 15 months, with funding from InnovateUK, we’ve extended the platform with a new data tool, Site Insights, which is based on open datasets and open source software. A large part of my effort, in my role as CTO, goes into bridging the gap between the data we have on sites across the country and the data that comes from parameter masterplans.

Let’s take an example where this works in practice. Inside Site Insights we do active travel modelling. The transport model is based on the existing network of streets, footpaths and cycle paths from OpenStreetMap. When the user modifies the paths for a new site layout, the tool calculates how their changes affect the reachability of various land uses from housing. Reachability is a core part of the 20-minute neighbourhood principle. Many councils have requirements for green space accessibility, which is a key parameter that then determines developer contribution payments. We’ve written about the 20-minute neighbourhood here.
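As a rough illustration of the principle, and not a description of how Site Insights is implemented, the sketch below treats the path network as a weighted graph and uses Dijkstra's shortest-path algorithm to find which destinations fall within a walking-time budget. The node names, the walking times and the one-way 20-minute budget are assumptions made for this example.

```python
import heapq
from typing import Dict, List, Set, Tuple

# Adjacency list: node -> list of (neighbour, walking time in minutes).
Graph = Dict[str, List[Tuple[str, float]]]


def add_path(graph: Graph, a: str, b: str, minutes: float) -> None:
    """Add a two-way walking link between two nodes."""
    graph.setdefault(a, []).append((b, minutes))
    graph.setdefault(b, []).append((a, minutes))


def walking_times(graph: Graph, origin: str) -> Dict[str, float]:
    """Shortest walking time from origin to every connected node (Dijkstra)."""
    best = {origin: 0.0}
    queue = [(0.0, origin)]
    while queue:
        elapsed, node = heapq.heappop(queue)
        if elapsed > best.get(node, float("inf")):
            continue  # stale queue entry; a shorter route was already found
        for neighbour, minutes in graph.get(node, []):
            candidate = elapsed + minutes
            if candidate < best.get(neighbour, float("inf")):
                best[neighbour] = candidate
                heapq.heappush(queue, (candidate, neighbour))
    return best


def reachable_within(graph: Graph, origin: str, budget: float) -> Set[str]:
    """Nodes, other than the origin, reachable within the time budget."""
    times = walking_times(graph, origin)
    return {n for n, t in times.items() if n != origin and t <= budget}


# Hypothetical site network; all times are invented for illustration.
network: Graph = {}
add_path(network, "housing", "junction", 6)
add_path(network, "junction", "shops", 5)
add_path(network, "junction", "park", 18)

before = reachable_within(network, "housing", 20)

# The designer adds a direct footpath from the housing to the park.
add_path(network, "housing", "park", 9)
after = reachable_within(network, "housing", 20)

print(sorted(before))
print(sorted(after))
```

With the assumed times, the park is 24 minutes away along the original network and so falls outside the budget. The new footpath brings it to 9 minutes, so it appears in the second result.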

For open and green space, the tool benchmarks the proposed design against established space standards. For example, an initial pilot for the new tool, the Beaulieu master plan by Countryside plc in Essex, was benchmarked against the Chelmsford Open Space Study. I find this functionality, which allows checking design proposals against agreed standards, really exciting, because the proposed design either passes the automated test or it does not. It’s a glimpse of what a fully featured planning technology platform could look like.
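A benchmark of this kind can be sketched in a few lines, assuming the standard is expressed as hectares of each open space type per 1,000 residents. The figures below are placeholders invented for the example; they are not the values in the Chelmsford Open Space Study or the Beaulieu master plan, and `open_space_shortfalls` is a hypothetical helper.

```python
from typing import Dict


def open_space_shortfalls(standards_ha_per_1000: Dict[str, float],
                          proposed_ha: Dict[str, float],
                          population: int) -> Dict[str, float]:
    """Hectares missing per open space type; 0.0 means the standard is met."""
    shortfalls = {}
    for space_type, per_1000 in standards_ha_per_1000.items():
        required = per_1000 * population / 1000
        provided = proposed_ha.get(space_type, 0.0)
        shortfalls[space_type] = max(0.0, required - provided)
    return shortfalls


# Placeholder standards and proposal (assumptions, not real figures).
standards = {"parks": 1.5, "play_space": 0.25}
proposal = {"parks": 4.0, "play_space": 0.5}

shortfalls = open_space_shortfalls(standards, proposal, population=2400)
for space_type, missing in shortfalls.items():
    status = "pass" if missing == 0 else f"short by {missing:.2f} ha"
    print(space_type, status)
```

Reporting the size of the shortfall, and not only a pass or fail, gives the designer something to act on.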

Where we are going: automated code checking

Let me start with a personal experience. In order to get to the local shops, I have to walk along a footpath, under the metro line, which is railed off on one side and walled off on the other. The footpath is less than two metres wide. While this was not ideal in the years before 2020, in the age of COVID-19 it has made social distancing impossible, and wearing a mask for this journey is essential, even if it’s not mandatory.

This is not a trivial issue to fix. Nevertheless, it would be nice to know it had been logged in a system. Indeed, in my home town, Canberra, the ACT Government has developed such an issue tracking system. This is a useful first step, but we can go further.

In a software development system, the issue would be written down in the form of a user story. In this case, it might look something like this:

As a resident of the neighbourhood at [postcode area], I want to walk from my house to the shops at [address] without having to pass other footpath users at a distance of less than two metres.

In an equivalent planning system, a city planner would write the user story as an automated test. Any proposed development would then be subject to the test suite. It wouldn’t even be reviewed as long as the tests didn’t pass.
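As a sketch of what such a test might look like, the Python below checks every segment of a walking route against a required clear width. The two-metre passing distance comes from the user story; the half-metre allowance per walker, the segment names and the widths are assumptions for illustration, and no existing planning system is implied.

```python
from dataclasses import dataclass
from typing import List

PASSING_DISTANCE_M = 2.0   # from the user story
WALKER_ALLOWANCE_M = 0.5   # assumed space taken up by each walker
REQUIRED_WIDTH_M = PASSING_DISTANCE_M + 2 * WALKER_ALLOWANCE_M


@dataclass(frozen=True)
class Segment:
    """One stretch of footpath along a route (hypothetical structure)."""
    name: str
    clear_width_m: float


def narrow_segments(route: List[Segment], required_width_m: float) -> List[str]:
    """Names of the segments that are too narrow for distanced passing."""
    return [s.name for s in route if s.clear_width_m < required_width_m]


def route_passes(route: List[Segment]) -> bool:
    """The user story is satisfied only if no segment is too narrow."""
    return not narrow_segments(route, REQUIRED_WIDTH_M)


# Invented measurements for the existing route and a proposed redesign.
existing_route = [
    Segment("residential street", 3.5),
    Segment("metro underpass", 1.8),
    Segment("shopping parade", 4.0),
]
proposed_route = [
    Segment("residential street", 3.5),
    Segment("widened metro underpass", 3.2),
    Segment("shopping parade", 4.0),
]

print(route_passes(existing_route), narrow_segments(existing_route, REQUIRED_WIDTH_M))
print(route_passes(proposed_route))
```

Here the existing route fails because of the underpass, while the proposed redesign passes. A failing test names the offending segment, which is exactly the kind of feedback an applicant could act on before a human reviewer is involved.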

Internationally, automated code-checking has already been implemented, more or less successfully, on the scale of individual buildings, in a number of places. In the building information modelling (BIM) community, these efforts have joined up to become buildingSMART, who have published a set of data standards known as the Industry Foundation Classes (IFC). At building level, companies such as NBS have digitised the specifications of a large number of construction products, making code-checking feasible. Building codes also tend to be much more explicit about requirements than codes for urban design.

In 2018, buildingSMART put out a call for participation in the development of an IFC for Site, Landscape and Urban Planning. Since that time, buildingSMART have published IFC4.3 RC1, which aims “to extend the IFC schema to cover the description of infrastructure constructions,” particularly with respect to transport infrastructure. Assuredly, this is a welcome addition, but transport infrastructure isn’t the same as urban design. The situation is further frustrated by an ongoing disconnect between the BIM community, which created IFC, and local government, who publish planning codes in geospatial formats - BIM is not GIS.

A common data environment (the architectural equivalent of a version control system) concerns itself primarily with BIM, so, for now, code checking parameter plans will continue to be a manual process. Moreover, there is no standard format for a CDE, and they are, of course, not visible to the public.

To be clear, I am certain that automated processes will never fully replace planning. Code-checking is not a substitute for design expertise. Moreover, consultation and collaboration are human processes that inform better decisions and generate the buy-in necessary to make communities work. Nevertheless, it is possible to imagine an open system that supports these processes with clear evidence, increasing transparency and changing the culture of resistance to development that exists in the opaque planning system today. This system would be based on open standards, demonstrating agreed compliance and conformance to a framework of policies which could be publicly scrutinised.

Conclusions: #plantech

You may have seen the #plantech hashtag on Twitter (or LinkedIn) and wondered what it meant. Plantech, a subset of proptech, is a nascent field in the IT industry. The UK is doing some of the most important work in the field, and has produced some of the most promising early firms, including PlaceChangers. Indeed, public and non-governmental organisations like the Connected Places Catapult and IC3 at Northumbria University are undertaking intensive work to use innovative technology to break down barriers in planning and placemaking.

National government has a role to play in investing in local planning authorities and regulating data standards. In the private sector, meanwhile, we can help by building the tools that tie these elements together. Three pieces are presently missing from the planning software ecosystem:

- An issue tracker: any member of the public should be able to find, for their local authority, a single point of entry for planning, where they can make requests and see what is currently planned or under discussion

- A version tracker: any member of the public should be able to find, for their local authority, a history of planning decisions made, when, by whom and, most importantly, why

- A code tracker: any member of the public should be able to find, for their local authority, a detailed and explorable summary of the local plan, along with proof that planning decisions have conformed to it

Indeed, the above will be valuable for planning applicants, too.

Here in the UK, we have the opportunity to think big in this space, and the country stands to lead the world on the planning technology front. At PlaceChangers, we stand firmly on that front, and work, alongside local governments, developers, research institutions and the public, to build a suite of best-in-class digital tools that give those who share our ambitions what they need to build thriving places together.
